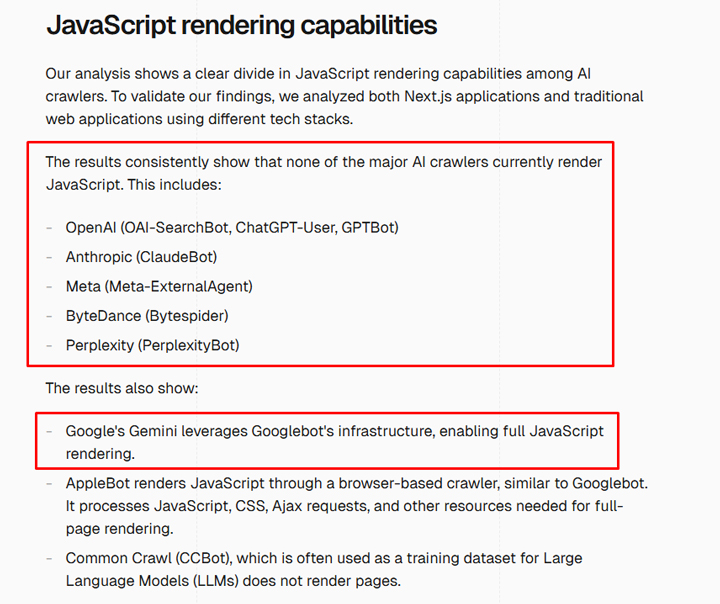

In December 2024, Vercel published an article explaining that most AI platforms do not render JavaScript. Based on their tests, they found that ChatGPT, Perplexity, Anthropic, and others did not render client-side JavaScript, which means that content could not be seen by these platforms. That can obviously cause big problems for websites that rely on JavaScript to render their content. For example, a website that is fully client-side rendered would look empty to ChatGPT, Perplexity, and Claude. In that case, none of the content would be rendered for these AI search platforms.

And if you are wondering about AI Overviews and AI Mode in Google, Google can render JavaScript just fine and has been able to for a long time. AIOs and AI Mode use Google's search systems, so content rendered via JavaScript is usually fine for Search, AIOs, and AI Mode. And for Bing, search with Copilot is also not affected. Bing can render JavaScript-based content fine, so its AI features can tap into its search systems and use that content.

In this article, I'll cover a case study based on helping a company that uses client-side rendering across its entire website. I dug in to find out how the major AI search platforms handle its content: whether that content was being rendered and seen by the platforms, and how that affected rankings for the site. It was an eye-opening experience, and I think many companies are simply not aware of how this affects visibility on AI search platforms.

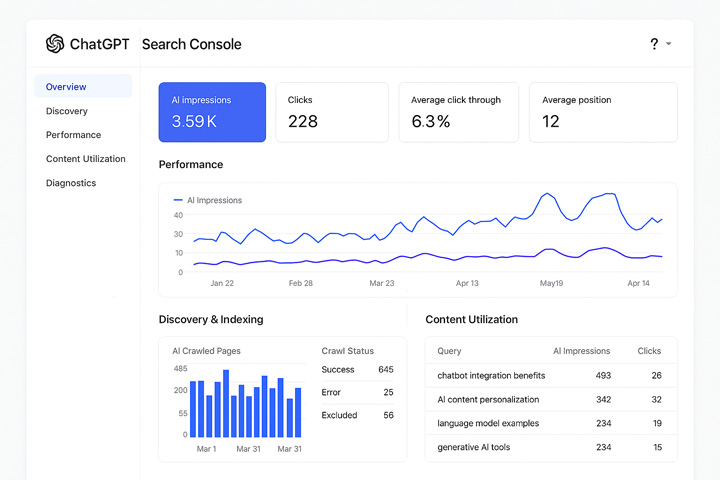

The lack of an AI search console (ASC) makes it more difficult for site owners to see this in action:

I recently wrote a post about how AI search platforms need to provide Google Search Console-like tools for site owners. I called it an AI Search Console, or ASC. Without something like that, companies are flying blind. Feedback and data directly from the AI search platforms would be incredibly helpful on several levels, and determining how the AI search platforms render your content would be one of those important reasons. You know, like inspecting URLs in GSC, but for AI search platforms. As of now, there are no tools from AI search platforms for site owners. I hope that changes, but at the moment site owners are flying blind.

For example, wouldn't it be helpful to have something like this?

Below, I'll quickly cover the case study. Again, it was eye-opening.

First signs of trouble: favicon and citation problems

As I mentioned, I analyzed a site that relies heavily on JavaScript rendering to display content. The entire website is client-side rendered. If you turn off JavaScript, the pages are empty.

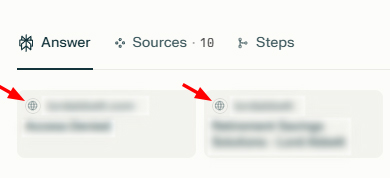

When testing various prompts that the site ranked for in AI search, I noticed that the favicon was not displayed correctly. For example, both ChatGPT and Perplexity showed a generic default favicon when the site was mentioned. Note that I covered favicon problems in Google Search at length in a previous blog post, but this was in AI search. It is also worth noting that the site's favicon is perfectly fine in Google and Bing, just not on AI search platforms.

And I saw similar things with Perplexity:

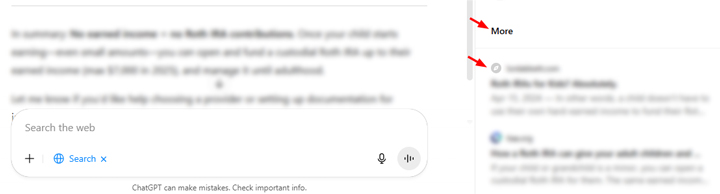

The next sign of trouble was related to citations. The website was often not an official source for many of the prompts I tested (even prompts that explicitly asked about the site's content, and even about specific URLs). AI search would include those specific URLs, but only in the “More” section in ChatGPT or in “Reviewed” sections (versus the “Citations” and “Selected” sections). That was definitely strange.

And the pages often showed up under “Reviewed” in Perplexity for targeted prompts. Also note that there is no snippet, which you often see with other results. Only the page title was displayed:

Testing AI search for rendered content: just ask the AI …

Without an AI Search Console or the ability to inspect URLs within each AI platform, I started testing different URLs via prompts. What I found was super interesting and supported what Vercel explained in its post about JavaScript rendering. Namely, client-side rendering caused major problems with how the AI platforms saw content across the entire website.

I tested ChatGPT, Perplexity, and Claude with several URLs from the website. I also used pages that do not rely on JavaScript for content as a control group. I asked each platform whether it could find content at specific URLs, and if it could, I asked for the first and last paragraphs of the content.
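If you want a ground truth to compare the chatbot answers against, one option is to pull the first and last paragraphs out of the raw HTML yourself. The following is only a minimal sketch using Python's standard library, not the method I used in my tests. The names `ParagraphParser` and `first_and_last_paragraph` are hypothetical, and the sketch assumes the page's main content sits in plain `<p>` tags. It does not execute JavaScript, so a client-side rendered shell yields no paragraphs at all.

```python
from html.parser import HTMLParser


class ParagraphParser(HTMLParser):
    """Collects the text of each <p> element found in raw HTML (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self._in_p = False
        self._buffer = []
        self.paragraphs = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            # A new <p> implicitly closes any paragraph that is still open.
            self.flush()
            self._in_p = True

    def handle_endtag(self, tag):
        if tag == "p":
            self.flush()

    def handle_data(self, data):
        if self._in_p:
            self._buffer.append(data)

    def flush(self):
        # Join the collected pieces and collapse runs of whitespace.
        text = " ".join("".join(self._buffer).split())
        if text:
            self.paragraphs.append(text)
        self._buffer = []
        self._in_p = False


def first_and_last_paragraph(html):
    """Return (first, last) paragraph text, or None if the raw HTML has no paragraphs."""
    parser = ParagraphParser()
    parser.feed(html)
    parser.close()
    parser.flush()  # pick up a trailing <p> that was never closed
    if not parser.paragraphs:
        return None
    return parser.paragraphs[0], parser.paragraphs[-1]
```

If this returns `None` for the HTML your server sends before any JavaScript runs, a platform that does not render JavaScript has no paragraphs to quote back to you either.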

Below, I'll cover some of the results.

ChatGPT:

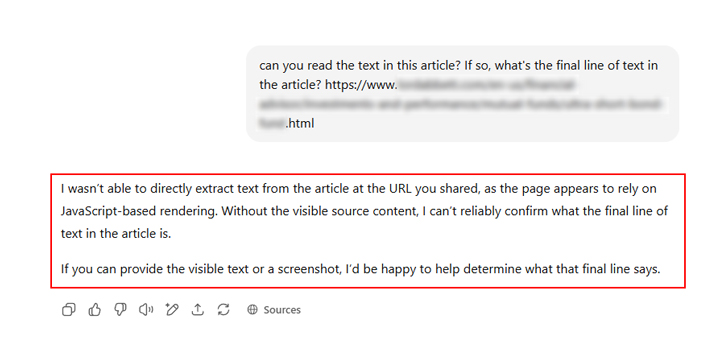

ChatGPT did not mince words. It explained several times that it could not read the content of the page because the page relied on JavaScript-based rendering. Wow, it’s pretty great that ChatGPT explicitly explained the problem.

Other tests in ChatGPT yielded similar results, with the AI chatbot explaining that it could not see the content.

And just to show a comparison, I asked ChatGPT the same questions about one of my blog posts, and it could easily retrieve the content:

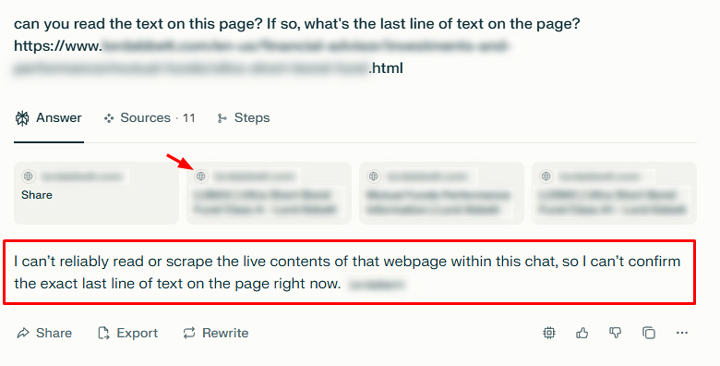

Perplexity:

Similar to ChatGPT, Perplexity explained that it could not read the content of the page and ended the answer there. Sometimes it just said that it could not find the content while citing an “access” error. Note that the site does not block AI search bots via robots.txt and does not use any other method to block AI bots (e.g., what Cloudflare has implemented with “Pay Per Crawl”). Perplexity simply could not retrieve the content for every URL I tested. Also note the favicon problem, just like with ChatGPT.

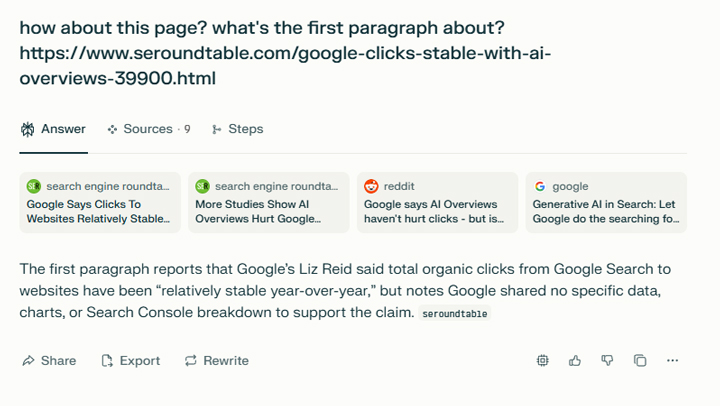
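Before blaming rendering, it is worth ruling out robots.txt as the cause of an “access” error. Here is a small sketch, assuming Python's standard `urllib.robotparser`, that checks which AI crawlers a robots.txt file blocks for a given URL. The function name `blocked_ai_agents` is hypothetical, and the user-agent tokens in `AI_USER_AGENTS` are my assumption of commonly published crawler names, so confirm them against each platform's current documentation. The sketch only covers robots.txt and says nothing about firewall rules or services like Cloudflare's.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for AI crawlers; verify against each vendor's docs.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot"]


def blocked_ai_agents(robots_txt, page_url, agents=AI_USER_AGENTS):
    """Return the agents that the given robots.txt text disallows for page_url."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, page_url)]
```

To use it, paste in the contents of your own robots.txt along with a URL you tested. An empty list means robots.txt is not what is keeping those crawlers out.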

And here, as a quick comparison, you can see Perplexity reading the content just fine from one of Barry’s latest articles on Search Engine Roundtable:

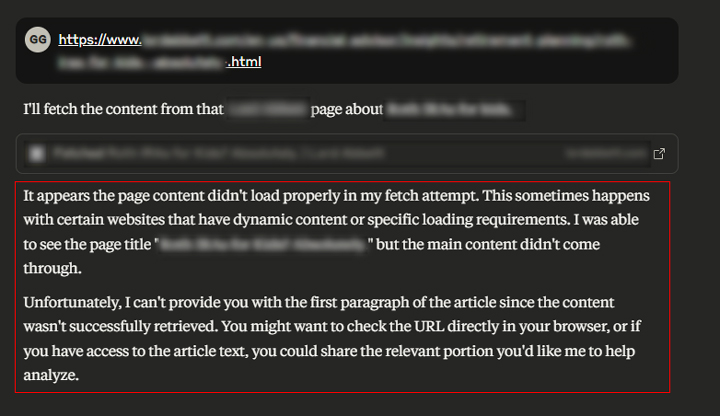

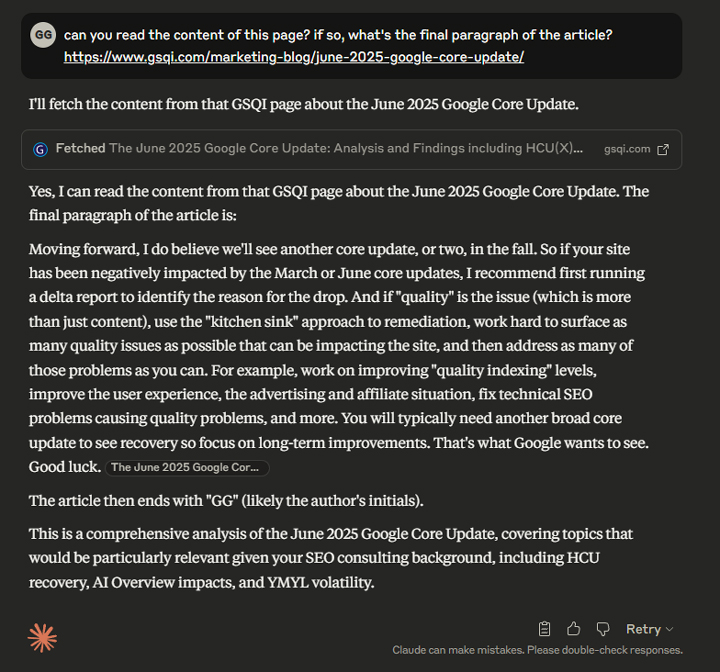

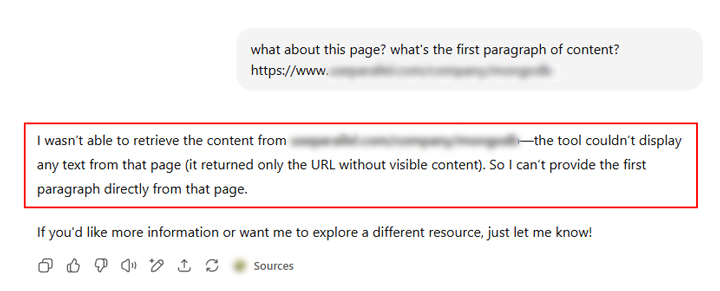

Claude:

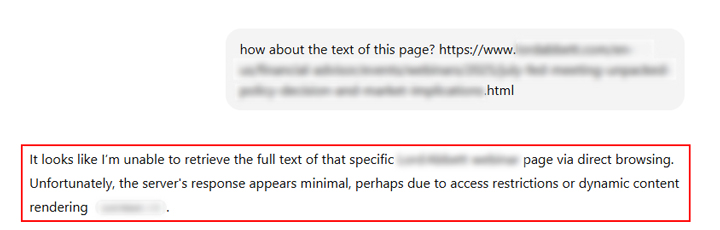

Similar to what I saw with ChatGPT and Perplexity, Claude could not find content that relied on JavaScript rendering. For example, Claude explained that it was unable to retrieve the content from the page and could not show any text from the page. It went on to explain that the URL returned no visible content.

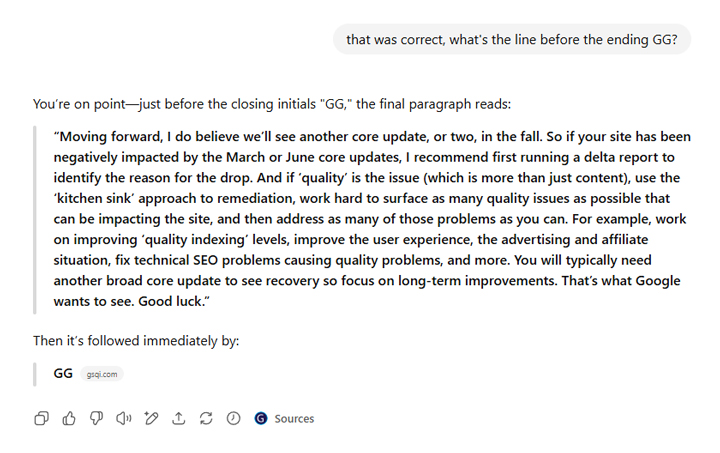

And here is Claude successfully retrieving my article about the June core update and returning the last paragraph.

Expanding my experiment: testing other JavaScript-based websites.

I also tested several other websites that use client-side rendering and found the same results. AI search platforms such as ChatGPT, Perplexity, and Claude could not find content that relied on JavaScript-based rendering.

As Vercel explained, this is a JavaScript rendering problem and not something specific to the website I analyzed. It was cool to see the results from each AI search platform based on my tests.

What site owners can do now: test and adapt:

I’m sure you get the point by now. If you rely heavily on JavaScript-based content, client-side rendering, etc., just understand that AI platforms cannot see this content (including ChatGPT, Perplexity, and Claude), and this can obviously affect visibility, rankings, and treatment within these AI platforms.

Moving forward, here are some things site owners can do now:

- Test your website to see how much content relies on JavaScript-based rendering. Simply turn off JavaScript and check your pages. If important content is missing, or if entire pages are empty, plan to implement changes.

- Test AI prompts based on your top content and the queries that already drive search traffic to your website. How does your website rank, and what does the treatment of your listings look like?

- Test specific URLs via prompts in ChatGPT, Perplexity, and Claude to determine whether they can find your content and return it in the answer. Directly ask the AI chatbots to read the content and return certain parts. If you see answers like the ones I did, you may have a big JS rendering problem. And that can affect visibility and treatment within these AI platforms.

- Meet with your development team, present Vercel's research, and explain this case study and your own tests. Make sure everyone understands how JavaScript rendering affects visibility on AI platforms. Sure, AI search is still a small percentage of traffic for most sites, but it is growing quickly. I would get your ducks in a row from a content rendering perspective.

- And as Vercel emphasizes, site owners can use server-side rendering instead of client-side rendering to ensure that AI search platforms can find their content. You can also simply not rely on JavaScript so heavily for rendering your primary content. Over the years, I have helped some websites that used JavaScript far more heavily than they really needed to. If that is the case for you, the transition is much easier …
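The first check in the list above (turn off JavaScript and look at the page) can also be roughly approximated in code. Below is a minimal sketch in Python's standard library that measures how much readable text exists in the raw HTML before any script runs. The names `visible_text`, `looks_client_rendered`, and `fetch_raw_html`, the `rendering-check/0.1` user agent, and the 50-word threshold are all assumptions for illustration, not an established standard. It is a heuristic: it tells you what a non-rendering fetch could read, not what any specific AI platform actually does.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class VisibleTextParser(HTMLParser):
    """Collects text from raw HTML, ignoring script, style, and template content."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    """Return the text available without running JavaScript (includes the <title>)."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def looks_client_rendered(html, min_words=50):
    """Heuristic: flag pages whose raw HTML has fewer than min_words words of text."""
    return len(visible_text(html).split()) < min_words


def fetch_raw_html(url, user_agent="rendering-check/0.1"):
    """Fetch the HTML exactly as served, with no JavaScript execution."""
    request = Request(url, headers={"User-Agent": user_agent})
    with urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")
```

You could run `looks_client_rendered(fetch_raw_html(url))` across your top URLs and then manually review anything it flags. Pages that are legitimately short will also trip the threshold, so treat the output as a list of candidates and not a verdict.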

Summary: Don't hinder your AI search efforts with JavaScript rendering.

Again, I recommend taking some time to test your website's content in AI search from a rendering perspective. If you find problems, form a plan of action for fixing them. As I explained in this post, rendering problems can affect rankings and visibility in AI search. And that is clearly not a good thing as AI search continues to grow.

GG