Every reliable tactic that marketers love today, from video content to email marketing to blogging, was once a new experiment that early adopters tested and developed. Experimenting with new strategies is fundamental to marketing: it helps brands reach new customers and collect data for smarter business decisions.

Although experimentation is nothing new, digital marketing offers brands more flexibility and potential. Let’s look at the types of experiments, what metrics to track, and how to design experiments across all marketing channels for maximum success.


What are marketing experiments and how do they work?

Marketing experiments are controlled changes to a marketing message or campaign to improve reach or conversion rates. These tests can be a small, single optimization or a campaign-wide experiment. Successful marketing experiments evaluate both quantitative data and qualitative factors, and campaign results feed directly into the next iteration of marketing materials.

Experiments are part of step four of the Loop Marketing cycle: evolve in real time. Here are quick examples of marketing experiments that complement the cycle:

| Experiment example | How it feeds the marketing cycle |
| --- | --- |
| Change the color of the CTA button on a landing page | Measures the immediate impact on click-through rate (CTR); the winning version is then iterated on to improve conversion rates |
| Test UGC vs. brand photography in paid ads | Uses engagement and conversion data to develop an ad strategy based on audience response |
| A/B test email subject lines | Evaluates open rates, engagement rates, and response quality to refine future messages |

The elements every marketing experiment needs

Before you spend marketing budget on an experiment, make sure it has everything it needs to succeed: a solid foundation, clear test factors, predetermined success metrics, and a deliberately chosen framework.

The basics

Every marketing experiment is built on a few key elements: a specific hypothesis, test subjects, and independent and dependent variables.

- Measurable hypothesis (expected result): A clear, testable prediction.

- Subjects: Who is exposed to the experiment?

- Independent variable: The element that marketers intentionally change.

- Dependent variable: The measured result.

Here’s an example of what that looks like: A local coffee shop is running a Facebook advertising campaign targeting people who have liked its page (subjects). The owners predict that offering a 10% discount on rainy days (independent variable) will increase Facebook ad conversion rates (dependent variable) by 20% compared to recurring ads that don’t change with the weather.
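A hypothesis like “increase conversion rates by 20%” is easiest to test when the target is written down as a number before launch, because 20% could mean a relative lift (2.0% to 2.4%) or 20 percentage points. The minimal sketch below assumes a relative lift; the 2.0% baseline and 2.3% observed rate are hypothetical figures, not data from the coffee shop example.

```python
def target_rate(baseline_rate: float, expected_lift: float) -> float:
    """Conversion rate the variant must reach to meet a hypothesized relative lift."""
    return baseline_rate * (1 + expected_lift)


def relative_lift(baseline_rate: float, variant_rate: float) -> float:
    """Relative change of the variant versus the baseline (0.20 means +20%)."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be greater than zero")
    return (variant_rate - baseline_rate) / baseline_rate


# Hypothetical figures: recurring ads convert at 2.0%, and the hypothesis
# predicts a 20% relative lift for the rainy-day discount ads.
baseline = 0.020
goal = target_rate(baseline, 0.20)
print(f"The variant needs a conversion rate of about {goal:.1%}")

observed = 0.023  # hypothetical observed conversion rate for the variant
print(f"Observed lift: {relative_lift(baseline, observed):+.0%}")
```

In this hypothetical case, a 2.3% result would be an improvement but would fall short of the predicted 20% lift.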

Test factors

Marketing experiments require multiple testing factors, such as control vs. variant, randomization, and experiment duration.

- Control: The original version of a message, ad, or experience (base version).

- Variant: The version containing the intended change being tested (e.g. new text, creative materials, or promotions).

- Randomization: The process by which people are randomly assigned to see either the control or the variant.

- Duration: The length of the experiment depends on how much data is required to reliably compare the results.
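Randomization is usually handled by the ad platform or testing tool, but the idea is easy to illustrate. The sketch below shows one common approach, hash-based bucketing, and is not a description of how any particular platform works; the function name, user IDs, and experiment name are hypothetical.

```python
import hashlib


def assign_group(user_id: str, experiment: str, variant_share: float = 0.5) -> str:
    """Deterministically assign a user to 'control' or 'variant'.

    Hashing the experiment name together with the user ID gives every user a
    stable pseudo-random position in [0, 1): the same person always sees the
    same version, and separate experiments split the audience independently.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    position = int(digest[:8], 16) / 2**32  # first 32 bits mapped to [0, 1)
    return "variant" if position < variant_share else "control"


# Hypothetical user IDs and experiment name
for user in ["user-101", "user-102", "user-103"]:
    print(user, assign_group(user, "rainy-day-discount"))
```

Because assignment depends only on the IDs, a returning visitor keeps seeing the same version, which protects the comparison from people switching groups mid-test.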

Success metrics

Measuring the success of a marketing experiment is more nuanced than relying on a single metric. Both primary and secondary metrics need to be considered:

- Primary metric: The single desired outcome (such as lead generation or sales)

- Secondary metrics: Supporting results that provide additional context (like engagement or time on page)

Note that data alone doesn’t tell the whole story of an experiment’s success (more on this below).

A/B vs. multivariate marketing experiments

Marketing experiments commonly follow one of three frameworks: A/B testing, multivariate testing, and holdout testing. Each evaluates different elements of a marketing campaign and yields its own insights.

| Framework | What it does | How it feeds the marketing cycle |
| --- | --- | --- |
| A/B testing | Compares a single change (the variant) against the control | Insights are easy to interpret and can be applied immediately to improve future iterations |
| Multivariate testing | Compares multiple variables at once | Results are harder to interpret but can provide insights that contribute to the holistic development of marketing materials |
| Holdout testing | Compares viewers who are exposed to a campaign with viewers who are intentionally not exposed to it, to measure incremental impact | Identifies whether marketing exposure produces results that would not otherwise have occurred |
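The incremental impact a holdout test measures comes down to simple arithmetic: the conversion rate of the exposed group minus the conversion rate of the group that never saw the campaign. The sketch below is a minimal illustration of that calculation; the function name and campaign figures are hypothetical.

```python
def incremental_impact(exposed_conversions: int, exposed_size: int,
                       holdout_conversions: int, holdout_size: int) -> dict:
    """Compare an exposed group with a holdout group that never saw the campaign."""
    exposed_rate = exposed_conversions / exposed_size
    holdout_rate = holdout_conversions / holdout_size
    incremental_rate = exposed_rate - holdout_rate
    return {
        "exposed_rate": exposed_rate,
        "holdout_rate": holdout_rate,
        # Conversions the campaign added on top of what would have happened anyway
        "incremental_conversions": incremental_rate * exposed_size,
        # Undefined (nan) when nobody in the holdout converted
        "relative_lift": incremental_rate / holdout_rate if holdout_rate > 0 else float("nan"),
    }


# Hypothetical campaign: 45,000 people exposed, 5,000 held out
result = incremental_impact(1350, 45000, 125, 5000)
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```

Here the holdout group still converts at 2.5% without the campaign, so only the difference above that baseline is credited to the campaign.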

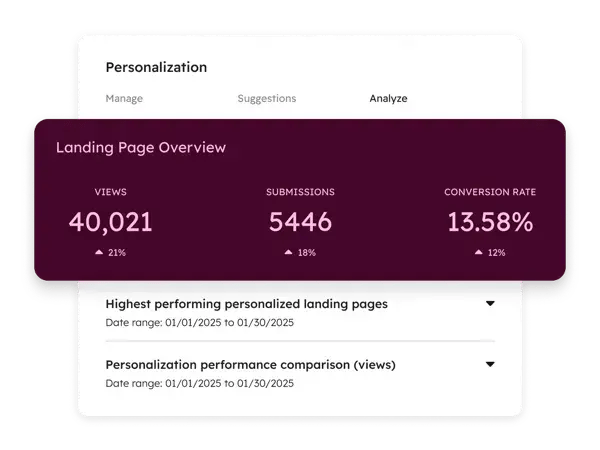

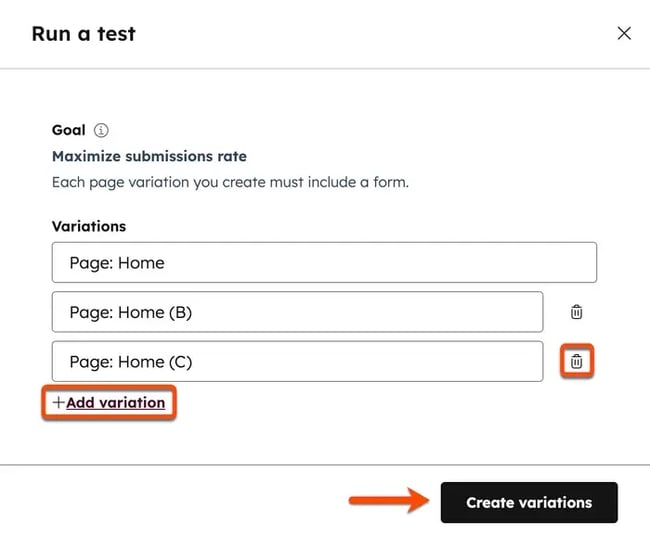

Both A/B testing and multivariate testing are built into marketing software like HubSpot Marketing Hub. Users can quickly test variations of content and see how they perform:

This type of adaptive testing allows marketers to run multiple experiments simultaneously, allowing for up to five variations at once:

After you understand the different frameworks, follow the five steps below to start your experiment.

Steps for designing and running marketing experiments

Choose the right question and success metric.

The first step in designing a marketing experiment is to formulate the question (hypothesis) to be tested and tie it to a specific success metric.

Below are some sample question formulas and applications. Note that the questions asked are all clear and data-driven. This is important because unclear hypotheses increase the risk of interpretation bias and spurious correlations.

| Question formula | Example |
| --- | --- |
| Will (change X) increase (metric Y) for (audience/marketing asset)? | Will moving the email opt-in higher up the page increase leads generated by 20% on my most-read blog post? |
| Will (change X) decrease (metric Y) for (audience/marketing asset)? | Will removing steps at checkout reduce abandoned carts by 5% for digital products? |
| Will (change X) shorten the time to (desired action) for (asset)? | Will adding social proof to our email nurture sequence shorten the time to purchase for our software demos? |

Where should I start? I recommend experimenting with a low-performing asset first. Find an ad, landing page, or web page with a low conversion rate and develop a hypothesis for improving it.

Choose a test type and define the variable.

After marketers choose the right question for their experiment, they need to choose a testing framework. Choosing the wrong type of test or testing too many variables at once can make results difficult to interpret and act on.

Although there are many different types of marketing tests, let’s look at three common test types, the variables they measure, and common examples.

| Test type | Examples | Variable |
| --- | --- | --- |
| A/B | Email subject lines, sales page CTAs, button color | A single isolated element, e.g., copy, placement, or color |
| Multivariate | Testing multiple page elements at once, such as headlines, layout, and images | Multiple elements tested simultaneously to measure interaction effects |
| Holdout | Measuring the true impact of ads, lifecycle emails, or always-on campaigns | Exposure vs. no exposure to a campaign or marketing materials |
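A quick way to see why multivariate tests need more traffic than A/B tests: a full-factorial design tests every combination of element options, so the number of versions multiplies. The sketch below enumerates those combinations with the standard library; the page elements and the per-version sample size are hypothetical.

```python
from itertools import product


def full_factorial(factors: dict[str, list[str]]) -> list[dict[str, str]]:
    """Return every combination of factor levels in a full-factorial test."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


# Hypothetical page elements for a landing page test
factors = {
    "headline": ["benefit", "curiosity"],
    "layout": ["single column", "two column"],
    "image": ["product photo", "customer photo", "illustration"],
}
versions = full_factorial(factors)
print(len(versions), "versions")  # 2 x 2 x 3 combinations

visitors_per_version = 2000  # hypothetical sample needed for each version
print("Visitors needed:", len(versions) * visitors_per_version)
```

Three modest elements already produce twelve versions, each of which needs its own share of traffic.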

Where should I start? I recommend an A/B test. It is one of the most effective marketing experiments because it gives a clear answer about a single variable. Use HubSpot’s free A/B testing kit to run repeatable experiments quickly.

Estimate the sample and set a stopping rule.

Marketing experiments need a clear end point (stopping rule) that signals when the experiment has collected enough data (sample) to support or reject the hypothesis. The stopping rule should be objective and defined before the experiment starts.
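One way to make a sample-size stopping rule objective is to estimate the required sample before launch. The minimal sketch below uses the standard normal-approximation formula for comparing two conversion rates; the 5% significance level and 80% power are conventional defaults rather than requirements, and the 2.0% and 2.4% rates are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, expected_rate: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH group to detect a change in conversion rate.

    Uses the normal-approximation formula for a two-sided, two-proportion test.
    """
    if baseline_rate == expected_rate:
        raise ValueError("expected_rate must differ from baseline_rate")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    p_bar = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_power * math.sqrt(baseline_rate * (1 - baseline_rate)
                              + expected_rate * (1 - expected_rate))
    ) ** 2
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)


# Hypothetical: 2.0% baseline conversion rate, hoping to detect a lift to 2.4%
print(sample_size_per_group(0.020, 0.024))
```

Smaller expected lifts require far larger samples, which is one reason low-traffic pages often need longer tests.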

Some common stopping points for marketing experiments are:

| Possible stopping point | What determines it | Example |
| --- | --- | --- |
| Traffic/sample size | Whether enough data has been collected to reliably compare results between the control and the variant | The experiment ends after 15,000 viewers have seen the test materials |
| Length of time | The experiment’s predefined time frame | The experiment ends after 14 days |
| KPIs met | Whether the success metric supports the hypothesis | The hypothesized 5% improvement in click-through rate is confirmed |
| Budget | How much marketing spend is allocated to the test | The experiment ends once ad spend reaches $1,000 |
| Negative performance | Whether the variant causes serious harm | A social media experiment ends when it lowers the engagement rate for the entire account by 2% |
| Data quality issue | Whether the results can be trusted | Tracking errors or attribution problems are detected |
| External event | Whether an external force influenced the results | A national emergency dominates the news cycle and social media promotions are paused |
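Writing the quantifiable stopping rules down before launch makes them harder to bend mid-test. The sketch below covers only the sample size, time, budget, and negative-performance rules; data quality issues and external events still need human judgment. The class, function, and threshold values are hypothetical, loosely based on the examples in the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class StoppingRules:
    min_sample_per_group: int   # e.g., from a sample-size estimate
    max_days: int               # time limit
    max_spend: float            # budget cap in dollars
    max_relative_drop: float    # guardrail: stop if the variant is this much worse


def stopping_reason(rules: StoppingRules, sample_per_group: int, days_running: int,
                    spend: float, control_rate: float, variant_rate: float) -> Optional[str]:
    """Return the first predefined stopping condition that has been met, or None."""
    # Check the guardrail first so a harmful variant is stopped right away.
    if control_rate > 0 and (control_rate - variant_rate) / control_rate >= rules.max_relative_drop:
        return "negative performance"
    if spend >= rules.max_spend:
        return "budget reached"
    if sample_per_group >= rules.min_sample_per_group:
        return "sample size reached"
    if days_running >= rules.max_days:
        return "time limit reached"
    return None


# Hypothetical thresholds and mid-test numbers
rules = StoppingRules(min_sample_per_group=15000, max_days=14,
                      max_spend=1000.0, max_relative_drop=0.02)
print(stopping_reason(rules, sample_per_group=9000, days_running=6,
                      spend=420.0, control_rate=0.050, variant_rate=0.052))
```

Returning `None` means the test keeps running; any other value names the rule that ended it, which is also useful for the documentation step later.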

Build, run QA, and launch.

How an experiment is designed and executed has a major influence on its results. Building quality assurance into the process keeps marketing effort and budget from being spent chasing inconclusive or biased results.

During the creation, quality assurance, and launch phases of an experiment, consider the following checks and balances:

Build:

- Control and variant are implemented correctly.

- Only the intended variable is different.

Quality assurance:

- Tracking events are triggered correctly.

- The randomization works as expected.

Launch:

- Test launches during normal traffic patterns.

- Tracking mechanisms (UTM codes, pixels, analytics) record data correctly.
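One quick way to confirm that randomization works as expected is a sample ratio mismatch (SRM) check: if a 50/50 split delivers noticeably uneven group sizes, something in the setup (redirects, tracking, targeting) is probably wrong. The sketch below assumes a two-group test; the 0.001 alarm threshold is a commonly used convention rather than a rule, and the visitor counts are hypothetical.

```python
import math
from statistics import NormalDist


def sample_ratio_mismatch(control_n: int, variant_n: int,
                          expected_variant_share: float = 0.5,
                          threshold: float = 0.001) -> tuple[float, bool]:
    """Chi-square goodness-of-fit check (1 degree of freedom) on group sizes.

    Returns the p-value and whether it falls below the alarm threshold.
    """
    total = control_n + variant_n
    expected_variant = total * expected_variant_share
    expected_control = total - expected_variant
    chi2 = ((control_n - expected_control) ** 2 / expected_control
            + (variant_n - expected_variant) ** 2 / expected_variant)
    # With 1 degree of freedom, chi2 is the square of a standard normal variable.
    p_value = 2 * (1 - NormalDist().cdf(math.sqrt(chi2)))
    return p_value, p_value < threshold


# Hypothetical visitor counts from an intended 50/50 split
print(sample_ratio_mismatch(10000, 10050))   # small gap: likely just chance
print(sample_ratio_mismatch(10000, 10600))   # large gap: check the setup
```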

Below, I share specific tool recommendations for running marketing experiments.

Analyze, document and decide on the rollout.

Analysis is an essential part of the experimentation process. Determining whether an experiment succeeded or failed makes the collected data actionable and drives the design of future experiments.

Marketing teams should ask objective, investigative questions as they analyze and document results and decide on a rollout. Here is a checklist:

Analyze:

- Did the experiment reach its predefined stopping rule?

- Was enough data collected to evaluate the experiment?

- Did the variant outperform the control on the primary metric?

- Could external factors (seasonality, campaigns, news events) have influenced the results?

Document:

- What was the original hypothesis and was it supported by the data?

- What exact variable was changed?

- What unexpected results or behaviors occurred?

- Which assumptions were confirmed or invalidated?

Roll out:

- Should the winning variant be repeated or retested?

- Is this finding strong enough to apply to other channels or assets?

- Does this result justify expanding to 100% of traffic?

- Are there risks if this change becomes widespread?
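For the analysis question “Did the variant outperform the control on the primary metric?”, a two-proportion z-test is one standard way to check whether a difference in conversion rates is larger than chance alone would explain. The sketch below is a textbook version of that test, not a feature of any tool mentioned in this article; the counts are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(control_conversions: int, control_n: int,
                          variant_conversions: int, variant_n: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value). A small p-value suggests the difference is unlikely
    to be due to chance alone, assuming the sample size was fixed in advance.
    """
    p_control = control_conversions / control_n
    p_variant = variant_conversions / variant_n
    pooled = (control_conversions + variant_conversions) / (control_n + variant_n)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    if std_error == 0:
        raise ValueError("no variation in the data (0% or 100% conversion in both groups)")
    z = (p_variant - p_control) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: 400 of 20,000 control visitors and 480 of 20,000
# variant visitors converted on the primary metric.
z, p = two_proportion_z_test(400, 20000, 480, 20000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A positive z means the variant converted better; the p-value answers only the “chance or not” question, so the qualitative checks below still apply.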

Common pitfalls that cause marketing experiments to fail

Marketing experiments can be sabotaged by common pitfalls such as seasonal effects, skipping a qualitative review, choosing the wrong duration, and running multiple experiments at once. Heed these warnings.

Skipping qualitative review

While data is important for objectively assessing the success of a marketing experiment, human review of qualitative factors is essential. Scott Queen, senior product strategist at SegMetrics, recommends that marketers view experiments from both quantitative and qualitative perspectives.

Using lead generation as an example, Queen shared that “you have to think about it in two ways: the pure numbers… And then you have to analyze, are they the right people?”

A lead generation campaign that resulted in 1,000 new email sign-ups may look successful, but what if none of those sign-ups lived within an e-commerce company’s shipping area? Quantity alone cannot determine the success of a marketing experiment.
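To make the “are they the right people?” question measurable, teams can report a qualified-lead rate next to raw sign-ups. The sketch below assumes each lead record has a `country` field and that the shipping area is a set of country codes; both are hypothetical stand-ins for whatever qualification criteria your CRM actually stores.

```python
from typing import Callable


def qualified_share(leads: list[dict], is_qualified: Callable[[dict], bool]) -> float:
    """Fraction of leads that pass a qualification check."""
    if not leads:
        return 0.0
    return sum(1 for lead in leads if is_qualified(lead)) / len(leads)


# Hypothetical shipping area and sign-ups collected by two campaign variants
SHIPPING_COUNTRIES = {"US", "CA"}


def ships_to(lead: dict) -> bool:
    return lead["country"] in SHIPPING_COUNTRIES


variant_a = [{"country": "US"}, {"country": "DE"}, {"country": "FR"}, {"country": "BR"}]
variant_b = [{"country": "US"}, {"country": "CA"}]

for name, leads in [("A", variant_a), ("B", variant_b)]:
    share = qualified_share(leads, ships_to)
    print(f"Variant {name}: {len(leads)} sign-ups, {share:.0%} in shipping area, "
          f"{round(share * len(leads))} qualified")
```

In this toy example, variant A wins on volume while variant B produces more leads the business can actually serve.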

Choosing the wrong duration

The length of marketing experiments impacts marketing spend and the amount of data collected. Finding the right length for a marketing experiment is a balancing act.

How long should brands run a marketing experiment? That depends on the channel.

“Some of your marketing tactics are somewhat immediate, I would say you look at them weekly,” Queen shared. Other goals, such as increasing organic website traffic through an SEO experiment, can take months to generate enough data.
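When sample size is the binding constraint, a rough duration estimate is simple arithmetic: the required sample divided by daily eligible traffic. The sketch below assumes traffic is split evenly across groups and rounds up to whole weeks, a common practice (not a requirement) for capturing both weekday and weekend behavior; the numbers are hypothetical.

```python
import math


def estimated_duration_days(sample_per_group: int, groups: int, daily_visitors: int,
                            round_to_full_weeks: bool = True) -> int:
    """Days needed for eligible traffic to fill every group of an experiment."""
    if daily_visitors <= 0:
        raise ValueError("daily_visitors must be positive")
    days = math.ceil(sample_per_group * groups / daily_visitors)
    if round_to_full_weeks:
        # Cover whole weekly cycles so weekday and weekend behavior are both included.
        days = math.ceil(days / 7) * 7
    return days


# Hypothetical: 21,000 visitors needed per group, 2 groups, 3,000 eligible visitors a day
print(estimated_duration_days(21000, 2, 3000))
```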

Ignoring seasonal effects

Tests conducted at atypical times (holidays, national emergencies, elections) may produce results driven by external influences rather than by the change being tested.

These shifts come from both audiences and algorithms. For example, as a Pinterest marketer, I know to avoid publishing evergreen content from Thanksgiving through Christmas because the Pinterest algorithm heavily favors seasonal content during that period.

During times of crisis, users’ attention, or even the time they spend on social media, may decrease. If possible, avoid running experiments during these periods to reduce the risk that external factors skew the results.

Running multiple experiments at the same time

Running multiple tests at the same time increases the risk of misattribution. Attribution is already a challenge in digital marketing because many touchpoints (e.g., influencer mentions or AI-generated overviews) are difficult to capture.

When possible, run experiments sequentially, or coordinate parallel tests so that their results can still be interpreted reliably. For example, change a single variable on the homepage and test those versions in parallel.
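One common way to coordinate parallel tests is to make them mutually exclusive, so each visitor is enrolled in only one experiment at a time. The sketch below illustrates the idea with hash-based assignment; the experiment names are hypothetical, and the trade-off is that each experiment receives only a share of the traffic, so it takes longer to reach its sample size.

```python
import hashlib


def _position(key: str) -> float:
    """Map a string to a stable pseudo-random number in [0, 1)."""
    digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
    return int(digest[:8], 16) / 2**32


def assign_exclusive(user_id: str, experiments: list[str]) -> tuple[str, str]:
    """Place each user in exactly one of several concurrent experiments.

    Because every user lands in a single experiment, no visitor sees two
    changes at once, and each result can be attributed to one variable.
    """
    slot = int(_position(f"layer:{user_id}") * len(experiments))
    experiment = experiments[slot]
    group = "variant" if _position(f"{experiment}:{user_id}") < 0.5 else "control"
    return experiment, group


# Hypothetical experiments running on the site at the same time
running = ["homepage-headline", "pricing-page-cta"]
print(assign_exclusive("user-101", running))
```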

Tools for planning, executing and analyzing marketing experiments

Consider the following tools to plan, run, and analyze your marketing experiments.

Marketing Hub

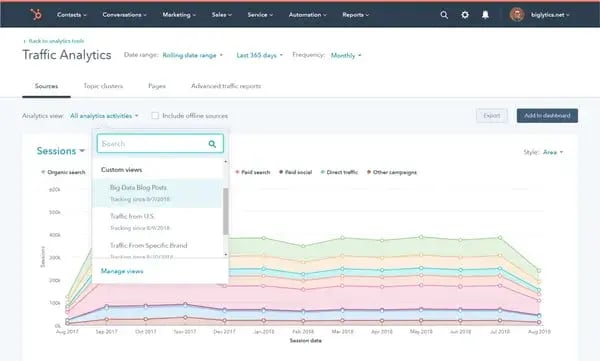

HubSpot’s Marketing Hub is a comprehensive platform that combines data from social media, a company’s website, CRM, search engines, and paid ads into one easy-to-use dashboard. Easily filter data by asset titles, type, interaction type, interaction source and campaigns.

Price: Paid plans start at $10/month

Standout features include:

- Ad retargeting and audience management: Create and test retargeting campaigns in experimental groups.

- Advanced Personalization: Create and test personalized content experiences based on CRM data, lifecycle stage, or behavior.

- Smart CRM integration: Run experiments with consistently defined audiences by leveraging CRM data across teams.

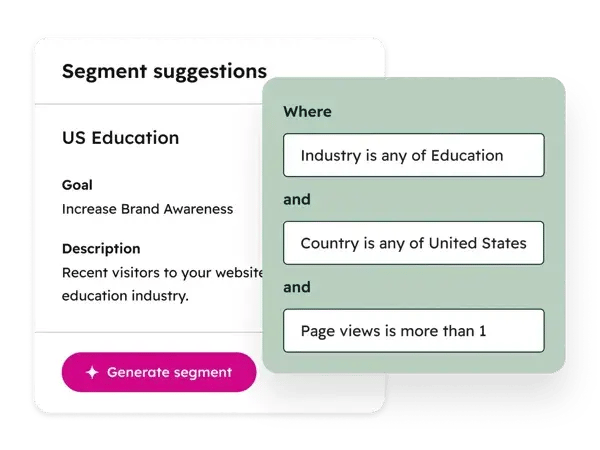

- AI-powered segmentation: Use AI segment suggestions to define and refine audiences for more relevant experiments.

- Journey mapping: Analyze customer journey data to find where visitors are most likely to convert.

- A/B and adaptive testing: Test variations of landing pages, emails, and CTAs to see what drives more engagement and conversions.

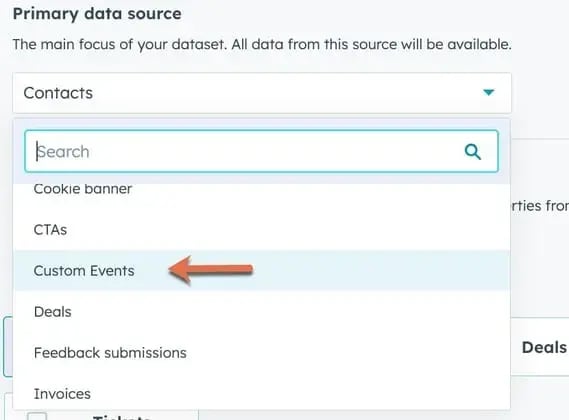

- Behavioral event tracking: Track and report on specific user actions to measure the impact of experiments beyond superficial metrics.

- Advanced Marketing Reports: Analyze experiment results across channels and funnel stages in unified dashboards.

- SEO and content performance tracking: Measure how content and SEO experiments impact organic traffic, engagement, and conversions.

What we like: HubSpot’s Marketing Hub makes data as actionable as possible, enabling easy decision-making and better understanding for all members of the marketing team. I like that the built-in AI capabilities work with you rather than taking over entire processes, giving you full control over your own experiments while leveraging the insights that AI brings.

SegMetrics

SegMetrics is a marketing attribution and reporting tool designed to help marketers understand how experiments impact sales. It connects marketing touchpoints across the funnel to downstream outcomes, making it easier to validate whether experiments result in qualified leads, customers, and lifetime value.

Price: Starting at $57/month

Key features include:

- Sales-based attribution

- Lifecycle and funnel reporting

- Campaign and channel attribution

- CRM and marketing tool integrations

- Lead quality analysis

What we like: The subscription-focused features. Many reporting tools struggle to measure results for companies that drive recurring subscription purchases. On a demo call, Queen showed me SegMetrics’ pre-built tools that marketers can use to figure out which experiments will extend customer lifetime value (LTV) for subscription-based businesses.

Google Analytics 4

Google Analytics 4 (GA4) measures countless user interactions and events. It provides a notoriously (or perhaps infamously) overwhelming amount of data, but when it comes to marketing experiments, GA4 helps marketers with funnel analysis, traffic segmentation, and validation of experiments across all channels.

Price: Free

GA4 features related to marketing experiments include:

- Event-based tracking

- Segment comparisons

- Conversions

- Traffic source and campaign reporting (with UTM parameters, see below)

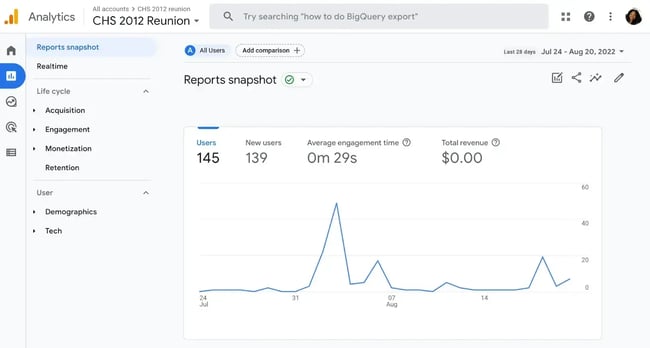

This GA4 snapshot illustrates how teams can analyze user volumes and engagement trends over time to evaluate whether an experiment meaningfully changes site behavior.

What we like: GA4 is widely used, making it a familiar and accessible data source for experiments. It helps teams validate experiment results by tracking user behavior, traffic sources, and conversions without requiring additional setup.

UTM parameters

UTM codes are not software or programs, but an important tool for tracking attribution across platforms and experiments. An Urchin Tracking Module (UTM) code is a small piece of text added to a URL to track the performance of that particular marketing asset.

Price: Free

These codes can contain up to five parameters:

- utm_source

- utm_medium

- utm_campaign

- utm_term (optional, mainly for paid search)

- utm_content (optional, often for A/B testing)
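UTM codes are usually created with a URL builder, but the format is simple enough to generate in bulk, for example one tagged link per test variant. The sketch below uses only Python’s standard library; the URL, source, medium, campaign, and content values are hypothetical, and using `utm_content` to label variants follows the convention in the list above.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str,
            term: Optional[str] = None, content: Optional[str] = None) -> str:
    """Append UTM parameters to a URL, keeping any query string it already has."""
    params = [("utm_source", source), ("utm_medium", medium), ("utm_campaign", campaign)]
    if term:
        params.append(("utm_term", term))
    if content:
        params.append(("utm_content", content))
    parts = urlsplit(url)
    query = parse_qsl(parts.query, keep_blank_values=True) + params
    return urlunsplit(parts._replace(query=urlencode(query)))


# Hypothetical A/B test: each email version links with its own utm_content value
for variant in ["subject-line-a", "subject-line-b"]:
    print(add_utm("https://example.com/spring-sale", "newsletter", "email",
                  "spring-sale", content=variant))
```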

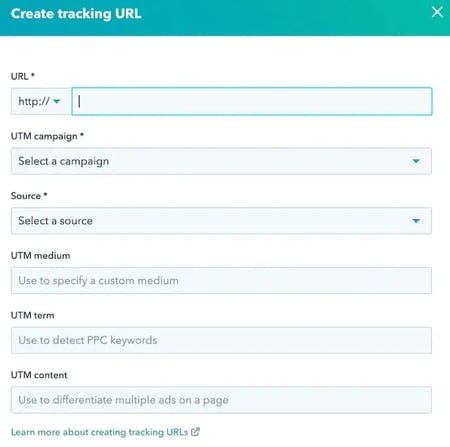

Here is an example from the HubSpot blog:

UTM codes do not replace attribution software like HubSpot. Instead, they work together to improve campaign-level attribution and tracking.

You can easily create UTM codes in HubSpot or with the Campaign URL Builder for Google Analytics.


What we like: It is not a standalone tool, but UTM parameters are essential to the experimentation process. I like how quick and easy they are to create.

Examples of real marketing experiments

Let’s look at some real marketing experiments: their hypotheses, variations and results. The experiments in this section cover different areas of the sales funnel and are based on real case studies and companies.

Lead qualification and automation

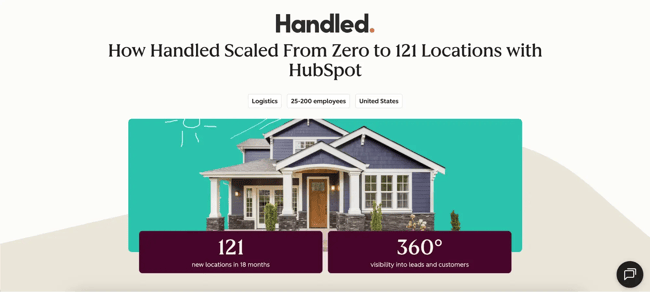

Handled worked with HubSpot to centralize and refine its lead qualification process to improve conversions and sales efficiency in the decision stage of the funnel.

- Hypothesis: By replacing manual coordination with automated workflows, Handled could increase lead-to-customer conversion rates and provide a seamless customer experience that competitors relying on manual processes couldn’t match.

- Variant: Handled moved from fragmented tools to a centralized HubSpot CRM system. They implemented programmable automation to instantly sync logistics data and trigger personalized customer communications as soon as a lead reaches the decision stage.

- Business result: The team achieved a “single source of truth” that allowed them to focus on closing deals instead of manual data entry.

Consider applying this real-world example to your marketing in two ways.

Test lead quality, not just lead volume.

Teams can experiment with form fields, qualification questions, or gated content to check whether fewer but more qualified leads lead to better downstream results. This helps shift experimentation from vanity metrics to revenue impact.

Align messages with sales conversations.

Another experiment to consider is testing landing pages and advertising messages against real sales objections or frequently asked questions. This confirms whether setting clearer expectations improves conversion quality and reduces friction later in the funnel.

Mini cart redesign

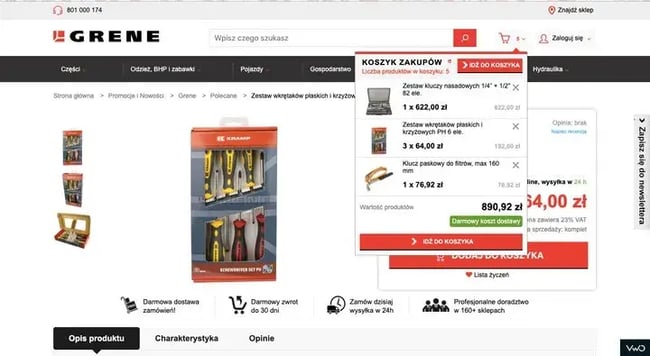

Grene and VWO Services (https://vwo.com/success-stories/grene/) conducted an A/B test on Grene’s mini cart (decision stage of the funnel), which reportedly increased cart page visits, conversions, and purchase quantity.

- Hypothesis: Making the mini cart easier to use (a more prominent CTA, less friction) would increase purchase quantity.

- Variant: Redesigned mini cart with prominent CTA, simplified UI and full product visibility.

- Business result: The redesign resulted in a 16.63% increase in conversion rate and a doubling of average purchase quantity.

The VWO Services case study notes that other changes were also made (and describes them in detail), but it cites the mini cart redesign as the catalyst.

What we like: In the case study summary, VWO Services noted that certain options were removed from the mini shopping cart design to reduce the likelihood of customers accidentally removing items from their shopping cart. I really like the UX considerations and the ripple effect of simple experiments.

Remove steps from checkout.

Teams can test removing secondary actions from the shopping cart or checkout flow. This experiment validates whether fewer choices lead to more completed purchases without affecting average order value.

Increase the visibility of the primary CTA.

Another simple test is to increase the importance of the primary checkout CTA through size, contrast, or placement. This will help determine whether a clearer visual hierarchy reduces purchase hesitation.

Removal of landing page navigation

HubSpot ran an A/B test removing top navigation from landing pages to see if this improved conversions in the decision stage of the funnel.

- Hypothesis: Removing navigation links/search bar would reduce distractions and put more focus on the primary conversion goal.

- Variant: Landing pages with navigation links removed, drawing attention to a single CTA.

- Business result: The test found that removing navigation at the decision stage was most effective, resulting in a 16% to 28% increase in conversion rates for high-intent pages (e.g. demo requests). Interestingly, the change had a much smaller impact on pages in the awareness stage.

Reduce cognitive load at the moment of decision.

Teams can test simplified landing pages to see if fewer choices lead to higher conversion rates. This is particularly effective when the goal is a single action, such as filling out forms or requesting a demo.

Match navigation depth to intent level.

Another idea is to selectively remove navigation only on decision-stage assets, but keep it on awareness or education pages. This helps determine whether focused experiences perform better once users are ready to convert.

Free trial CTA test

Going and Unbounce ran an A/B test on the homepage CTA to improve conversions in the decision stage of the funnel.

- Hypothesis: Changing the call to action from “Sign up for free” to “Try for free” would better communicate value and increase conversions.

- Variant: Changed the CTA text to highlight a free trial instead of a free plan.

- Business result: The variant resulted in a 104% increase in conversions month-over-month.

What we like: Ah, the power of focused, clever A/B testing. I think this works because the new wording makes the value of the premium offering clearer and reduces visitor hesitation.

Test value framing in CTAs.

Teams can experiment with CTAs that emphasize access over commitment. This helps validate which wording better reduces perceived risk at the decision stage.

Align CTA with product model.

Another simple test is to match the CTA text to how the product actually works, e.g., free trials or previews. This shows whether setting clearer expectations improves conversions by reducing friction and uncertainty.

Social listening

Rozum Robotics used the social listening tool Awario to strengthen PR and lead generation efforts for Rozum Cafe.

- Hypothesis: By monitoring online and social media mentions in real time, the team could identify niche audiences and influencers more effectively than with traditional research methods.

- Tactics: Implemented brand and competitive monitoring to track industry sentiment, identify relevant influencers in food technology and robotics, and interact with online mentions in real-time.

- Result: The team identified two new target groups, reduced PR research time by 70%, and improved lead quality through more precise targeting.

Audience discovery through social listening.

Teams can repeat this experiment by monitoring brand, competitor, and category keywords to discover unexpected audiences engaged in related topics. This helps verify whether current targeting assumptions match real-world conversations.

Influencer and media identification experiments.

Instead of relying on static media lists, marketers can test social listening to identify journalists, YouTubers, or niche communities that are already discussing related products or issues. This validates whether real-time signals lead to higher-quality PR opportunities.

Examples of marketing experiments by funnel stage

Marketing experiments can target audiences at different points in the customer journey: awareness, consideration, decision, and retention. The following 25 experiment ideas span these four stages, plus SEO and content experiments for long-term growth, and can help improve marketing ROI.

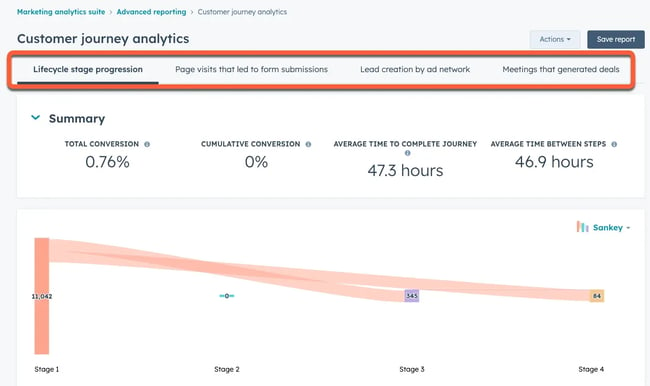

Consider using HubSpot’s advanced reporting tools to visually analyze viewers at different lifecycle stages.

Awareness experiments you can start this week

Experiments to increase awareness focus on brand recognition, first contact and product contextualization. Consider these ideas.

- Cold audience targeting test: Compare broad targeting with AI-suggested segments to see which leads to lower CPMs or higher engagement. HubSpot’s AI segment suggestions and Smart CRM help define and refine the target groups used in the experiment.

- Creative format test (static vs. video): Test whether short video ads outperform static images in terms of reach or impressions. Validates which creative format grabs the attention of a cold audience the fastest.

- Competitor Audience Test “Pain vs. Gain”: Test pain-focused and benefit-focused social media advertising messages when targeting users who follow a competitor to evaluate which framing drives increased engagement with cold audiences.

- Headline framing test (benefit vs. curiosity): Compare benefit-focused headlines with curiosity-focused headlines in paid social or display ads to see which framing earns more engagement.

- Message framing test: Test brand-focused messaging against product-focused messaging to see which drives more first-contact engagement. Results can be analyzed with HubSpot’s campaign and traffic analytics.

Consideration experiments that increase engagement

Consideration stage experiments focus on improving engagement, building rapport, and communicating product value. Consider these ideas.

- On-page engagement test: Compare static pages with pages that include interactive elements. Behavioral event tracking in HubSpot helps measure scroll depth, clicks, and other engagement signals.

- Email nurture sequencing test: Test different nurture paths for the same segment. Compare text-only emails with design-heavy HTML emails to see differences in engagement.

- Content format test (guide vs. checklist): Offer the same email opt-in as a long-form eBook versus a short checklist to see how much depth viewers want before taking the next step.

- Social proof placement test: Test testimonials above vs. below the fold on landing pages. Measure scroll depth and time on page to see which placement increases engagement.

- Lead magnet format test: Test a checklist versus a detailed guide on the same topic. HubSpot reporting shows which asset leads to deeper engagement and assisted conversions.

Experiments in the decision phase that lead to conversions

Decision-stage experiments test messaging, pricing, customer information intake, and retargeting to drive higher conversion rates. Consider these experiment ideas.

- Form length test: Test short vs. qualifying forms to balance conversion rate and lead quality. HubSpot’s Smart CRM data helps assess downstream impact beyond the initial conversion.

- CTA intent test: Compare low-commitment CTAs (“Get Started”) with high-intent CTAs (“Book a Demo”).

- Retargeting message test: Serve different retargeting ads to users who viewed pricing but didn’t convert.

- Urgency message test: Test countdowns, limited availability or deadline language. Checks whether urgency increases conversions without affecting trust.

- Pricing page experiment: Test simplified pricing layouts against detailed feature breakdowns. Adaptive testing in HubSpot allows teams to test multiple versions efficiently.

Retention and expansion experiments that improve LTV

Retention and expansion experiments analyze customer onboarding, communication and feedback with the aim of retaining customers for as long as possible. Consider these ideas:

- Lifecycle email timing test: Test when to introduce upsell or cross-sell messages. Lifecycle stages in HubSpot’s Smart CRM ensure users are evaluated consistently.

- Onboarding flow test: Compare a short onboarding sequence to a guided, multi-step experience.

- Customer feedback timing test: Try instant surveys versus milestone-based feedback. Reporting helps link feedback to churn or expansion.

- Personalized loyalty offers: Test personalized incentives based on usage or purchase history.

- Email frequency for product usage: Compare weekly and biweekly emails about educational content and product benefits to evaluate how frequency affects open rates and click-through rates without causing fatigue.

Easily analyze this data with HubSpot’s customer journey reporting.

SEO and content experiments for lasting growth

Experiments aimed at long-term organic growth, such as SEO and social media content tests, focus on appearing in search results, meeting user needs, and personalizing experiences with your brand.

- SERP feature optimization test: Test FAQ or snippet-friendly formatting. HubSpot analytics help monitor organic performance and engagement.

- Landing page A/B testing: Test two different landing pages that target the same keyword or search intent. Checks whether layout, messaging, or CTA structure improves engagement and conversions from organic traffic without changing rankings.

- Social post format test: Test different social media post formats, such as plain text, carousels, or short videos, while promoting the same content. Validates which format leads to higher click-through rates and repeat visits to your content.

- Content depth test: Compare concise answers with detailed, in-depth guides on the same topic. Validates how depth affects rankings, time on page, and conversion behavior.

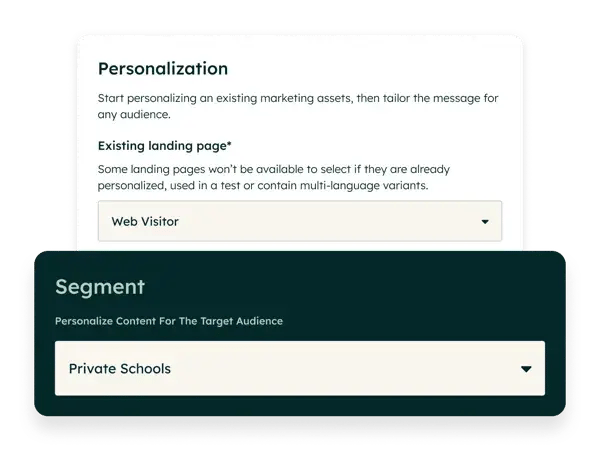

- Personalized landing page experiment: Test personalized landing page content based on visitor segmentation or CRM data against a generic version. This can be done using HubSpot’s AI-powered personalization tools (see image below).

Frequently asked questions about marketing experiments

How long should a marketing experiment run?

The duration of a marketing experiment is determined by the channel and sample size. Experimental paid advertising campaigns can be reviewed weekly, while efforts like organic SEO and organic social media posts can take weeks or months to collect sufficient data.

Can I test more than one variable at the same time?

Testing multiple variables simultaneously, called multivariate testing, is not recommended for beginners because the results are harder to interpret than A/B test results and the test needs considerably more traffic. However, multivariate tests can be effective for measuring interaction effects.

What happens if my marketing experiment doesn’t work?

An inconclusive (or “null”) result is still a win: it suggests that the specific change you tested doesn’t meaningfully affect your audience’s behavior. In this case, marketers shouldn’t simply rerun the same test but should develop a bolder hypothesis.

When should I end a marketing experiment early?

Marketing experiments should be stopped early if there are errors in attribution or analysis, if they produce an extremely negative result, or if external factors (such as national crises, elections, or holidays) affect the results. Avoid canceling tests in the first few days just because they look “bad,” as data often stabilizes over time.

Do I need statistical software to analyze the results?

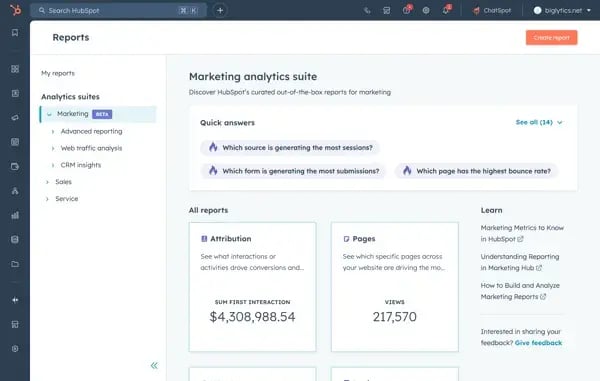

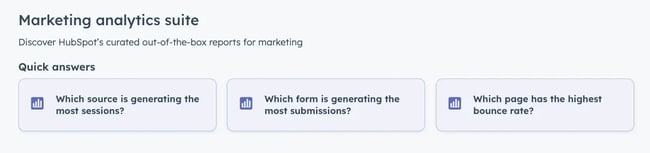

Marketing teams can run experiments without statistical software, but the data still needs to be captured reliably for accurate reporting. Good reporting software not only collects data, but also makes it actionable. For example, HubSpot has advanced marketing reports in the marketing analytics suite that provide quick answers such as “Which form generates the most submissions?”

Next Steps

Experimentation is in the DNA of modern marketing. It helps brands discover more effective marketing messages, promotions and strategies to convert viewers into customers. Done correctly, a brand’s experiments lead directly to business growth.

With built-in experimentation, personalization, and reporting capabilities, HubSpot makes it easier for teams to turn experiments into insights and insights into growth.